Bilingual Research with Natural-Language Materials / Bilingualism

In modern society, acquiring a foreign language and communicating fluently in it is becoming increasingly important. Improving the efficiency of learning a new language is therefore an essential research direction. In normal development, the human brain masters the native language (L1) during childhood. Learning a second language (L2) after puberty, when its properties differ from those of L1, must therefore involve a tuning of the brain's language network.

Our research has focused on the cognitive processes involved in spoken language comprehension in L1 and L2, with the aim of demonstrating the neural signature of L2 proficiency. The study had two phases: first, subject evaluation and recruitment, and second, a functional magnetic resonance imaging (fMRI) experiment. The evaluation and recruitment phase included a series of behavioral tests to probe each participant's L2 proficiency (e.g., vocabulary size, level of paragraph comprehension, self-evaluated L2 level, and amount of daily exposure to L2).

In the fMRI experiment, participants performed two tasks: (1) a classic task-based sentence comprehension task, in which they listened to sentences in L1, in L2, and in a language they did not understand, and (2) a story comprehension task, in which they listened to continuous stories in L1 and L2, as in a natural context. The sentence comprehension task allowed us to contrast the blood oxygen level-dependent (BOLD) signal among L1, L2, the unknown language, and the rest condition, and thereby to identify the language networks of L1 and L2. In addition, contrasting groups with different levels of L2 proficiency provided an opportunity to identify the neural representation of L2 proficiency.

In the story comprehension task, by contrast, the brain activity at a given location was taken from a pair of participants and used to compute their level of synchronization. Repeating this procedure across all brain locations and all possible pairs of participants offered a novel way to inspect language processing in a natural context, which may involve processes operating on longer time scales (e.g., integration).
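The pairwise synchronization procedure described for the story comprehension task can be sketched as follows. This is a minimal illustration, not the analysis pipeline used in the study. It assumes that synchronization is measured as the Pearson correlation between two participants' time courses at the same location, which the text does not specify. The function name `pairwise_sync` and the synthetic data are hypothetical.

```python
import numpy as np
from itertools import combinations


def pairwise_sync(data):
    """Pearson correlation between every pair of participants at each location.

    data: array of shape (n_subjects, n_timepoints, n_locations).
    Returns (pairs, sync), where sync has shape (n_pairs, n_locations).
    Assumes no time course is constant (its standard deviation must be non-zero).
    """
    data = np.asarray(data, dtype=float)
    # z-score each participant's time course at each location (population std)
    z = (data - data.mean(axis=1, keepdims=True)) / data.std(axis=1, keepdims=True)
    pairs = list(combinations(range(z.shape[0]), 2))
    # The mean product of two z-scored series equals their Pearson correlation
    sync = np.array([(z[i] * z[j]).mean(axis=0) for i, j in pairs])
    return pairs, sync


# Synthetic example (assumption): 4 participants, 200 time points, 2 locations.
# Location 0 carries a stimulus-driven signal shared by everyone; location 1 is noise.
rng = np.random.default_rng(0)
shared = rng.standard_normal(200)
data = rng.standard_normal((4, 200, 2))
data[:, :, 0] += 2.0 * shared

pairs, sync = pairwise_sync(data)
mean_sync = sync.mean(axis=0)  # average over all pairs, one value per location
print(len(pairs), mean_sync)
```

Averaging over pairs gives one synchronization value per location. In this toy example the location with the shared signal shows much higher synchronization than the noise-only location.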

Chinese Tone Perception in Congenitally Hearing-Impaired Cochlear Implant Users / Hearing loss

This study investigates the perceptual representation of Chinese lexical tones in adult cochlear implant (CI) users.

Experiment 1

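Tone identification and discrimination tasks were used to examine categorical tone perception in adult CI users. These were combined with monosyllable and sentence recognition tests to analyze how tone perception relates to monosyllable and sentence recognition. The results showed that CI users' monosyllable and sentence recognition abilities were positively correlated with their categorical tone perception.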

Experiment 2

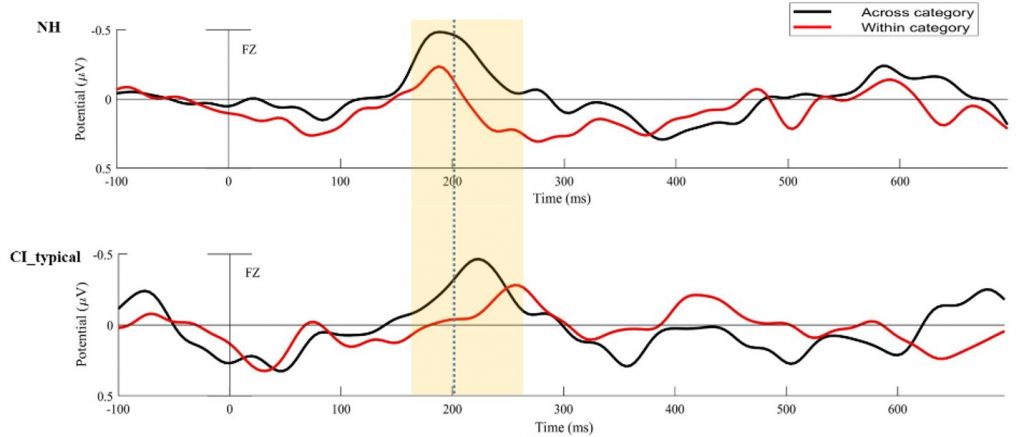

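Using the mismatch negativity (MMN) electrophysiological paradigm with across-category and within-category monosyllabic tone stimuli, we examined the neurophysiological basis of the tone representations established through the CI. The results showed that, compared with the normal-hearing group, CI users had a longer MMN peak latency. This suggests that although this group of adult CI users has typical categorical tone representations, their central nervous system still needs more time to detect and integrate acoustic information.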
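As an illustration of the peak-latency measure mentioned above, the following sketch computes a deviant-minus-standard difference wave and finds its most negative point within a search window. It is a toy example under stated assumptions, not the study's analysis: the 100 to 250 ms window, the synthetic waveforms, and the names `mmn_peak_latency` and `synthetic_deviant` are all hypothetical.

```python
import numpy as np


def mmn_peak_latency(standard, deviant, times, window=(0.10, 0.25)):
    """Return (latency_s, amplitude) of the most negative point of the
    deviant-minus-standard difference wave inside the search window.

    The default window (100 to 250 ms) is an assumption for illustration.
    """
    diff = np.asarray(deviant, dtype=float) - np.asarray(standard, dtype=float)
    mask = (times >= window[0]) & (times <= window[1])
    idx = np.argmin(diff[mask])  # MMN is a negative deflection
    return times[mask][idx], diff[mask][idx]


# Synthetic averaged responses (assumption): 2 ms sampling from -100 to 500 ms
times = np.linspace(-0.1, 0.5, 301)
standard = np.zeros_like(times)


def synthetic_deviant(peak_s):
    """Gaussian-shaped negative deflection centered at peak_s seconds."""
    return -2.0 * np.exp(-((times - peak_s) ** 2) / (2 * 0.03 ** 2))


# Two hypothetical group averages, one peaking earlier than the other
lat_a, amp_a = mmn_peak_latency(standard, synthetic_deviant(0.16), times)
lat_b, amp_b = mmn_peak_latency(standard, synthetic_deviant(0.20), times)
print(f"group A: {lat_a * 1000:.0f} ms, group B: {lat_b * 1000:.0f} ms")
```

A later peak in one group's difference wave, as for the hypothetical group B here, corresponds to the kind of longer peak latency reported for the CI users.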

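Future research will continue to examine the development of, and differences in, neurophysiological indices between CI users with typical and atypical categorical tone perception. The aim is to fully characterize Chinese tone representations in people with hearing loss and to support the development of approaches for rebuilding them.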

Neural Mechanisms of Humor / Humor

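Humor not only speeds up the communication of social information in interpersonal interaction but can also defuse many unexpected awkward moments. It has already been found that humor basically operates through the surprise produced by "expectation" and "incongruity", and that the left and right hemispheres must interact for a person to truly get the punchline. However, how the brain's language mechanisms analyze and understand humor in semantic content remains unclear.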

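Our team will use eight sets of Chinese two-person dialogues (crosstalk comedy, or xiangsheng, and interviews) to build databases of different physiological signals, including electroencephalography (EEG), magnetoencephalography (MEG), and functional magnetic resonance imaging (fMRI).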

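By analyzing how humorous content in natural dialogue is processed linguistically, and by decomposing the corresponding spatial and temporal brain dynamics, this research will help to further our understanding of how human language interaction works.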